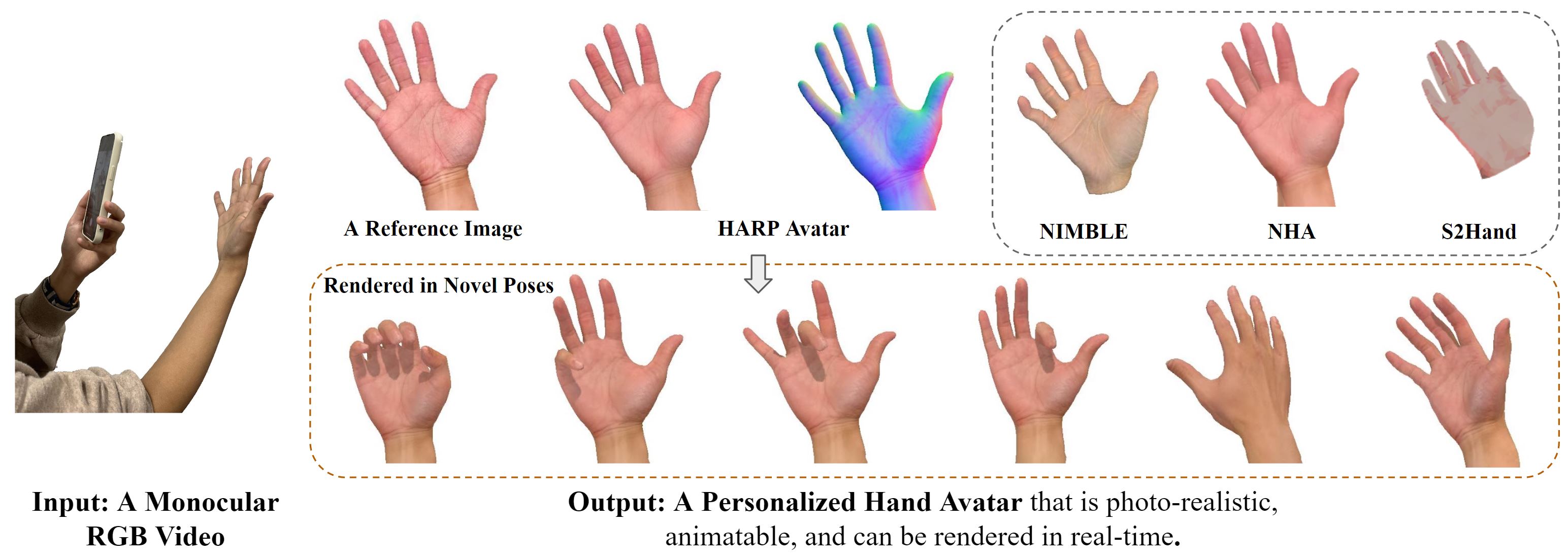

We present HARP (HAnd Reconstruction and Personalization), a personalized hand avatar creation approach that takes a short monocular RGB video of a human hand as input and reconstructs a faithful hand avatar with high-fidelity appearance and geometry.

In contrast to the prevailing trend toward neural implicit representations, HARP models a hand with a mesh-based parametric hand model, a vertex displacement map, a normal map, and an albedo map, without any neural components.
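To make the explicit representation concrete, here is a minimal Python sketch of a container that holds the four components and applies the vertex displacements to a posed base mesh. It is an illustration under stated assumptions, not HARP's code: the class name `ExplicitHandAvatar`, the field layout, and the choice of one scalar displacement per vertex applied along the vertex normal are all hypothetical, and the posed base vertices are assumed to come from the parametric hand model.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class ExplicitHandAvatar:
    """Hypothetical container for an explicit hand avatar (no neural components).

    Field names and layouts are illustrative assumptions, not HARP's actual data format.
    """
    base_vertices: List[Vec3]    # posed vertices from a parametric hand model (assumed given)
    vertex_normals: List[Vec3]   # unit normals of the base mesh, one per vertex
    displacements: List[float]   # assumed: one scalar offset per vertex, along its normal
    normal_map: List[List[Vec3]] = field(default_factory=list)  # UV-space normal texture
    albedo: List[List[Vec3]] = field(default_factory=list)      # UV-space RGB albedo texture

    def displaced_vertices(self) -> List[Vec3]:
        """Offset each base vertex along its normal by its displacement value."""
        return [
            (x + d * nx, y + d * ny, z + d * nz)
            for (x, y, z), (nx, ny, nz), d in zip(
                self.base_vertices, self.vertex_normals, self.displacements
            )
        ]
```

For example, a vertex at the origin with normal (0, 0, 1) and displacement 0.5 moves to (0, 0, 0.5). Because every component is plain data, an avatar stored this way can be edited and rendered by a standard mesh renderer without evaluating a network.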

As validated by our experiments, the explicit nature of our representation enables a truly scalable, robust, and efficient approach to hand avatar creation. HARP is optimized via gradient descent from a short sequence captured by a hand-held mobile phone and can be directly used in AR/VR applications with real-time rendering capability.

To enable this, we carefully design and implement a shadow-aware differentiable rendering scheme that is robust to the high degrees of articulation and self-shadowing regularly present in hand motion sequences, as well as to challenging lighting conditions. HARP also generalizes to unseen poses and novel viewpoints, producing photo-realistic renderings of hand animations with highly articulated motions. Furthermore, the learned HARP representation can be used to improve 3D hand pose estimation in challenging viewpoints. The key advantages of HARP are validated by in-depth analyses of appearance reconstruction, novel-view and novel-pose synthesis, and 3D hand pose refinement. HARP is an AR/VR-ready personalized hand representation that shows superior fidelity and scalability.

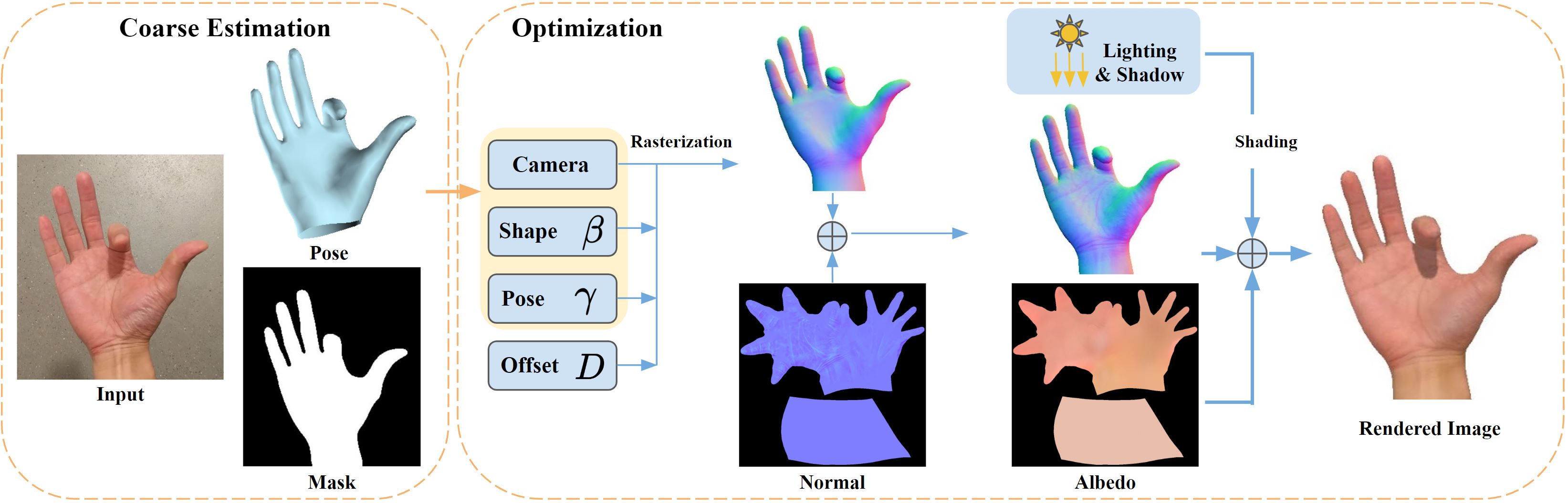

Given a short monocular RGB video of a hand, our hand avatar creation method consists of two steps: (1) coarse hand pose and shape estimation for initialization, and (2) an optimization framework that reconstructs the personalized hand geometry and appearance. The hand geometry is first rasterized and combined with a normal map. The shader then combines the albedo, geometry, and lighting to render the personalized hand. The optimization solves for the hand and scene parameters using only the input images.
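The optimization step follows an analysis-by-synthesis pattern: render with the current parameters, compare the result against the input frames, and descend the gradient of the photometric error. The toy sketch below shows only that pattern. It assumes a drastically simplified renderer in which each pixel equals its albedo times a known shading factor, uses a mean-squared-error loss with hand-derived gradients, and fixes hypothetical `lr` and `steps` values; a real pipeline would obtain gradients from a differentiable renderer and would also optimize pose, shape, geometry, and lighting.

```python
from typing import List


def render(albedo: List[float], shading: List[float]) -> List[float]:
    """Toy renderer (assumption): pixel intensity = albedo * fixed shading factor."""
    return [a * s for a, s in zip(albedo, shading)]


def photometric_loss(pred: List[float], target: List[float]) -> float:
    """Mean squared error between rendered and observed intensities."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(target)


def fit_albedo(observed: List[float], shading: List[float],
               lr: float = 0.5, steps: int = 200) -> List[float]:
    """Recover per-pixel albedo from observed intensities by gradient descent."""
    n = len(observed)
    albedo = [0.5] * n  # neutral initialization
    for _ in range(steps):
        pred = render(albedo, shading)
        # d/da_i of mean((a_i * s_i - y_i)^2) = 2 * (a_i * s_i - y_i) * s_i / n
        grads = [2.0 * (p - y) * s / n for p, y, s in zip(pred, observed, shading)]
        albedo = [a - lr * g for a, g in zip(albedo, grads)]
    return albedo
```

With `true_albedo = [0.8, 0.3, 0.6]` and `shading = [1.0, 0.5, 0.75]`, fitting against `render(true_albedo, shading)` recovers the albedo to within 1e-4. The darker pixel converges more slowly because its effective step size scales with the square of its shading factor.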

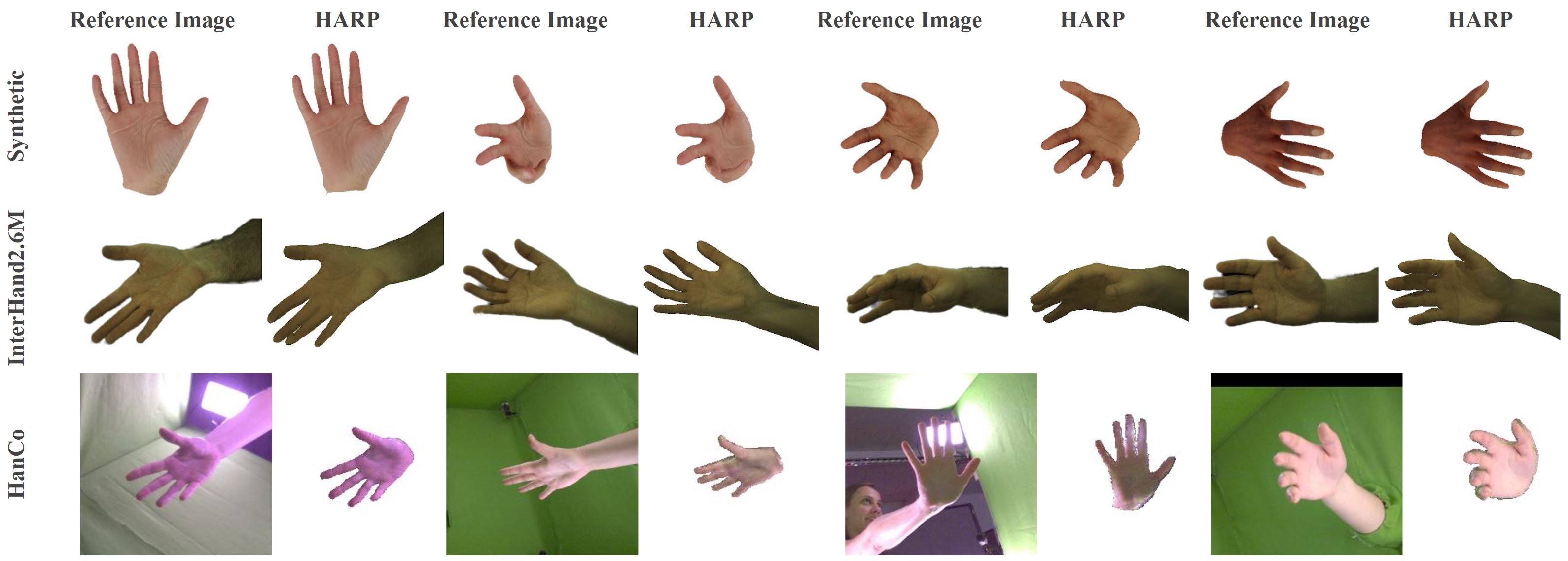

HARP can create an avatar from a short video captured in a variety of scenarios.

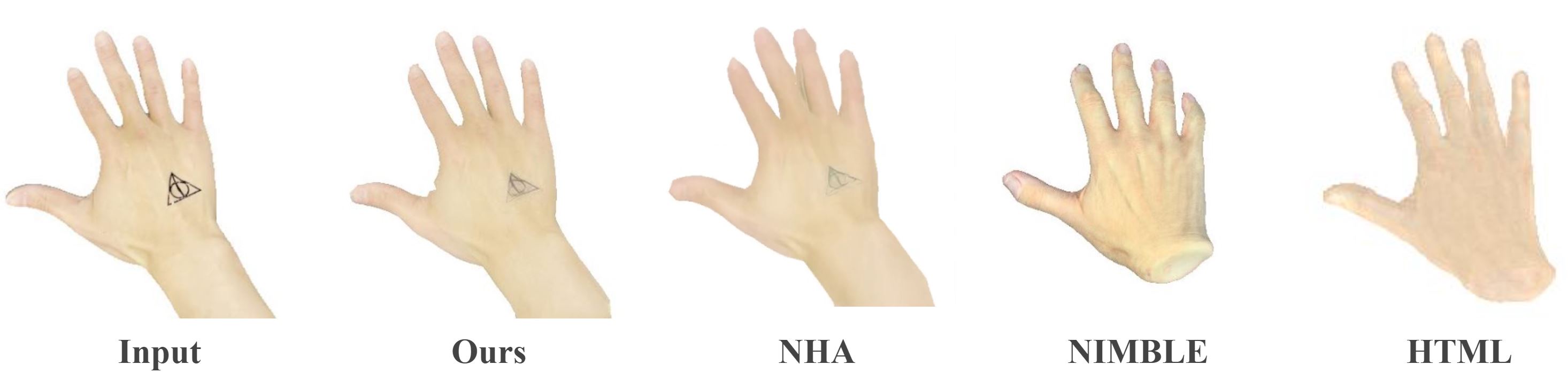

HARP works without modification on out-of-distribution appearances that parametric models cannot capture.

    @article{karunratanakul2022harp,
      author  = {Karunratanakul, Korrawe and Prokudin, Sergey and Hilliges, Otmar and Tang, Siyu},
      title   = {HARP: Personalized Hand Reconstruction from a Monocular RGB Video},
      journal = {arXiv preprint arXiv:2212.09530},
      year    = {2022},
    }